April 6, 2026 – By Tech Insights Team

Imagine asking your computer buddy for homework help or business ideas, only to learn the company behind it says, "Hey, this is just for laughs. Use it at your own risk." That's exactly what happened this week with Microsoft Copilot AI. The news spread like wildfire on social media and tech sites, leaving many wondering if their favorite AI helper is more toy than tool.

If you've been using Copilot on your Windows PC, in Microsoft 365, or on the web, you might feel surprised. Microsoft has been pushing this AI hard as your everyday smart assistant. But its own rules tell a different story. Let's break it all down in simple words, step by step, so even a curious 12-year-old can follow along. We'll explore what Microsoft really said, why it matters, and what you should do next.

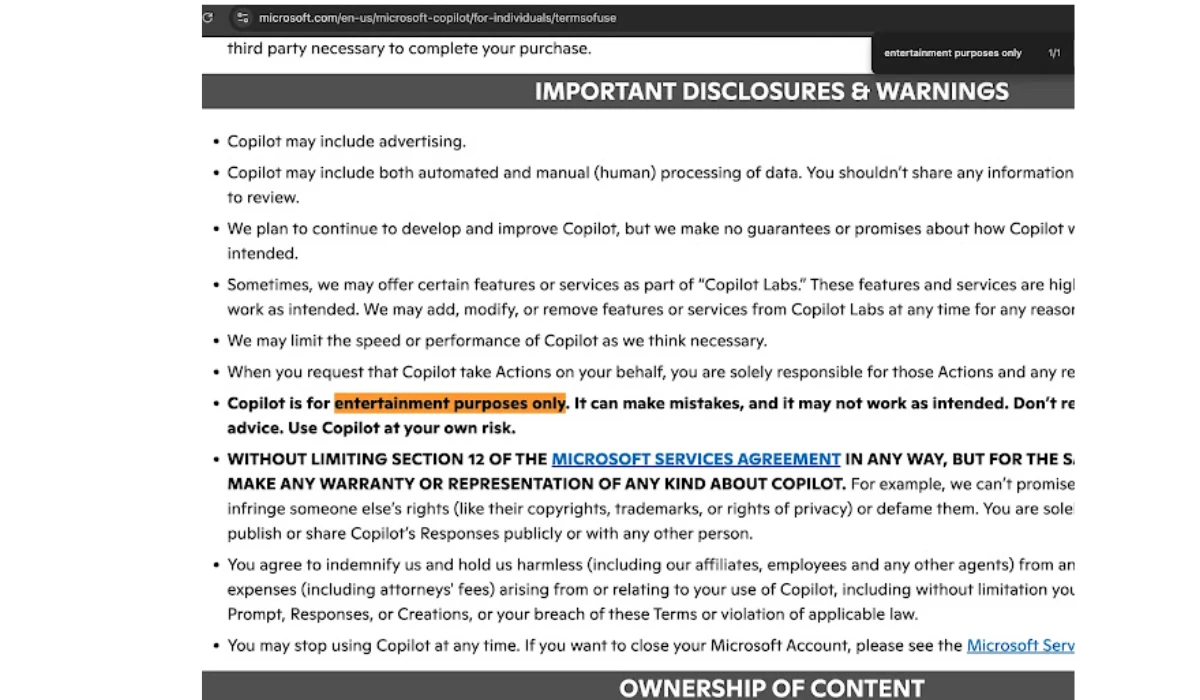

What Microsoft Actually Wrote About Copilot AI Being For Entertainment Purposes Only

Right there in the official Copilot Terms of Use, under a bold section called "IMPORTANT DISCLOSURES & WARNINGS," Microsoft spells it out clearly. The exact words are: "Copilot is for entertainment purposes only. It can make mistakes, and it may not work as intended. Don’t rely on Copilot for important advice. Use Copilot at your own risk."

This isn't some hidden fine print. It's in the document that became effective on October 24, 2025. The page also adds that Microsoft makes no promises about Copilot being perfect. It might create content that steps on someone else's copyright or privacy rights, and you – not Microsoft – are fully responsible if you share it.

Other parts of the terms remind users: Always check Copilot's answers before you act on them. Sources it pulls from the internet can be wrong or outdated. Sounds like good advice, right? But the "entertainment only" line hit hard because it feels so casual for such a powerful tool.

How This Bombshell News Surfaced in April 2026

The disclaimer didn't just appear yesterday. It sat quietly in the terms for months. Then, in the first week of April 2026, tech fans and journalists started noticing it. Sites like Tom's Hardware, PCMag, and TechCrunch picked it up, and suddenly everyone was talking.

People shared screenshots online. Some joked it was like buying a fancy car and reading in the manual, "This is just for show – don't actually drive it." Others felt it explained why Copilot sometimes gives weird or wrong answers. By April 5 and 6, the story was everywhere, from India Today to Gizmodo.

Microsoft's Quick Response: "It's Old Language – We'll Fix It Soon"

Microsoft didn't stay silent. A company spokesperson told PCMag that the "entertainment purposes" phrase is leftover "legacy language" from when Copilot first started as a simple search helper in Bing back in 2023. "As the product has evolved, that language is no longer reflective of how Copilot is used today and will be altered with our next update," they said.

So, no big panic from Redmond. They're planning a refresh. But until then, the warning stays. This quick reply shows Microsoft knows the wording doesn't match their big marketing push for Copilot as a serious productivity partner.

The Big Irony: Microsoft Pushes Copilot Hard For Work, Yet Warns It's Just Entertainment

Here's what makes this story so interesting. Microsoft has spent years and billions of dollars telling everyone Copilot is the future. You see it built into Windows 11, Office apps, the Edge browser, and even special "Copilot+ PCs" with fancy AI chips. They charge $20 a month for Copilot Pro and up to $30 per user per month for business versions in Microsoft 365.

Companies use it for writing emails, analyzing data, creating presentations, and coding. Students rely on it for essays. Yet the terms say don't trust it for "important advice." It's like a doctor handing you medicine and whispering, "But maybe don't take it too seriously."

This isn't the first time AI makers have added safety nets. OpenAI, Google, and xAI do something similar. They know these tools can "hallucinate" – that's when the AI confidently makes up facts that sound real but aren't. Training on huge piles of internet data means mistakes slip in.

A Quick Trip Back: How Copilot AI Grew From Bing Chat To Everyday Helper

Copilot started life in February 2023 as Bing Chat, powered by OpenAI's tech. Microsoft rebranded it and rolled it out everywhere. By 2024, Copilot+ PCs arrived with on-device AI that works faster and more privately. Features exploded: image creation, voice chat, even "Actions" where it can book flights or send emails for you (with your okay, of course).

But growth came with growing pains. Users reported wrong answers, biased results, or weird behaviors. Microsoft kept improving it, adding better safeguards. Still, the core warning remained in the terms as a legal shield.

Why AI Like Copilot Can Make Mistakes – Explained Simply

Think of Copilot as a super-smart student who read every book in the library but sometimes mixes up details. It predicts the next word in a sentence based on patterns. No real brain, no true understanding – just math on steroids.

Examples people share: AI chatbots have invented fake court cases and given bad medical tips. That's why the terms say check everything. For fun chats about movies or jokes? Perfect. For school tests or doctor visits? Double-check with real sources.
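
For readers who like to tinker, the "predict the next word from patterns" idea can be sketched in a few lines of Python. This is only a toy illustration and not how Copilot is actually built: real assistants use large neural networks that look at far more context. The function names (`train_bigrams`, `predict_next`, `complete`) and the tiny training text are made up for this example. The sketch simply counts which word most often follows another, then answers with the most common one, whether or not it is true.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for every word, which words follow it in the training text."""
    follows = defaultdict(Counter)
    words = text.lower().split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the most frequently seen follower of `word`, or None if unseen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


def complete(follows, prompt):
    """Guess the next word of a prompt by looking only at its last word."""
    words = prompt.lower().split()
    if not words:
        return None
    return predict_next(follows, words[-1])


# Hypothetical, tiny "internet": Paris is mentioned more often than Rome.
training_text = (
    "the capital of france is paris "
    "the biggest city in france is paris "
    "the capital of italy is rome"
)
model = train_bigrams(training_text)

print(predict_next(model, "is"))                   # paris
print(complete(model, "The capital of Italy is"))  # paris (confident, but wrong)
print(predict_next(model, "copilot"))              # None (word never seen)
```

The second answer is the interesting one. The toy model has seen "is paris" more often than "is rome," so it says "paris" even when the question is about Italy. It follows the pattern and never checks the fact, which is a very simplified picture of why a hallucination can sound so sure of itself.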

What This Means For You: Everyday Users, Students, And Businesses

Regular folks using the free version on copilot.microsoft.com should treat it like a brainstorming buddy. Great for ideas, summaries, or creative writing. But always verify facts.

Parents and kids: Fun for homework sparks, but not the final answer. Teachers already warn students about AI cheating – this disclaimer adds weight.

Businesses paying big bucks: The enterprise version has extra controls, but the individual terms still apply in spirit. Many companies add their own rules: "Use Copilot outputs only after human review."

The disclosure protects Microsoft from lawsuits if someone acts on bad advice and something goes wrong. Smart move in our lawsuit-happy world.

How Other AI Companies Handle The Same Issue

Microsoft isn't alone. ChatGPT's terms say similar things: outputs may be inaccurate, so don't use them for high-stakes stuff. Google's Gemini and xAI's Grok echo the same caution. It's industry standard because AI isn't perfect yet. The difference? Microsoft shouts loudest about Copilot being "your AI companion" in ads and keynotes.

Smart Tips To Use Microsoft Copilot Safely In 2026

Want to keep enjoying Copilot without worry? Here are easy, practical steps anyone can follow:

- Treat it as a starting point. Use it for rough drafts or fun facts, then fact-check with trusted sites like Wikipedia, official government pages, or books.

- Ask for sources. Say, "Give me that answer with links to check."

- Avoid sensitive topics. Skip medical, legal, or financial advice. Talk to real experts instead.

- Turn on privacy settings. Microsoft lets you control data use.

- Update regularly. New versions fix bugs fast.

- Combine tools. Use Copilot plus your own knowledge or other apps.

Following these keeps the fun without the risk.

What Happens Next For Copilot And AI Disclaimers?

Microsoft promises an update soon. When it drops, expect clearer language that matches today's Copilot – more like a helpful colleague than pure entertainment.

Bigger picture? This story reminds us AI is powerful but not magic. Regulators worldwide watch closely. Europe has strict AI laws. The U.S. debates rules too. Companies will likely keep warnings but make them friendlier as tech improves.

In the meantime, millions keep chatting with Copilot daily. The buzz might even make people more careful – which is a good thing.

Wrapping Up: Fun Tool Or Serious Risk? You Decide

Microsoft's "entertainment purposes only" line for Copilot AI isn't a full U-turn on their product. It's an honest reminder that AI still needs human smarts beside it. As of April 6, 2026, the terms stand, but change is coming.

Next time you fire up Copilot, remember those words. Laugh at the funny answers, brainstorm wild ideas, but double-check the important stuff. That's how you stay safe and smart in the AI age.

What do you think – should Microsoft update the warning faster? Drop your thoughts in the comments. And share this article if it helped you understand the buzz!